Why “Let AI Do the Doing” is a Trap for Beginners

The prevailing wisdom of the silicon age is seductively simple: “Let AI take care of the doing, so you can focus on the thinking.” We are told that by offloading “grunt work”—the repetitive, manual, or procedural tasks—we liberate our minds for high-level strategy and creative synthesis. We move from being laborers to being “orchestrators.”

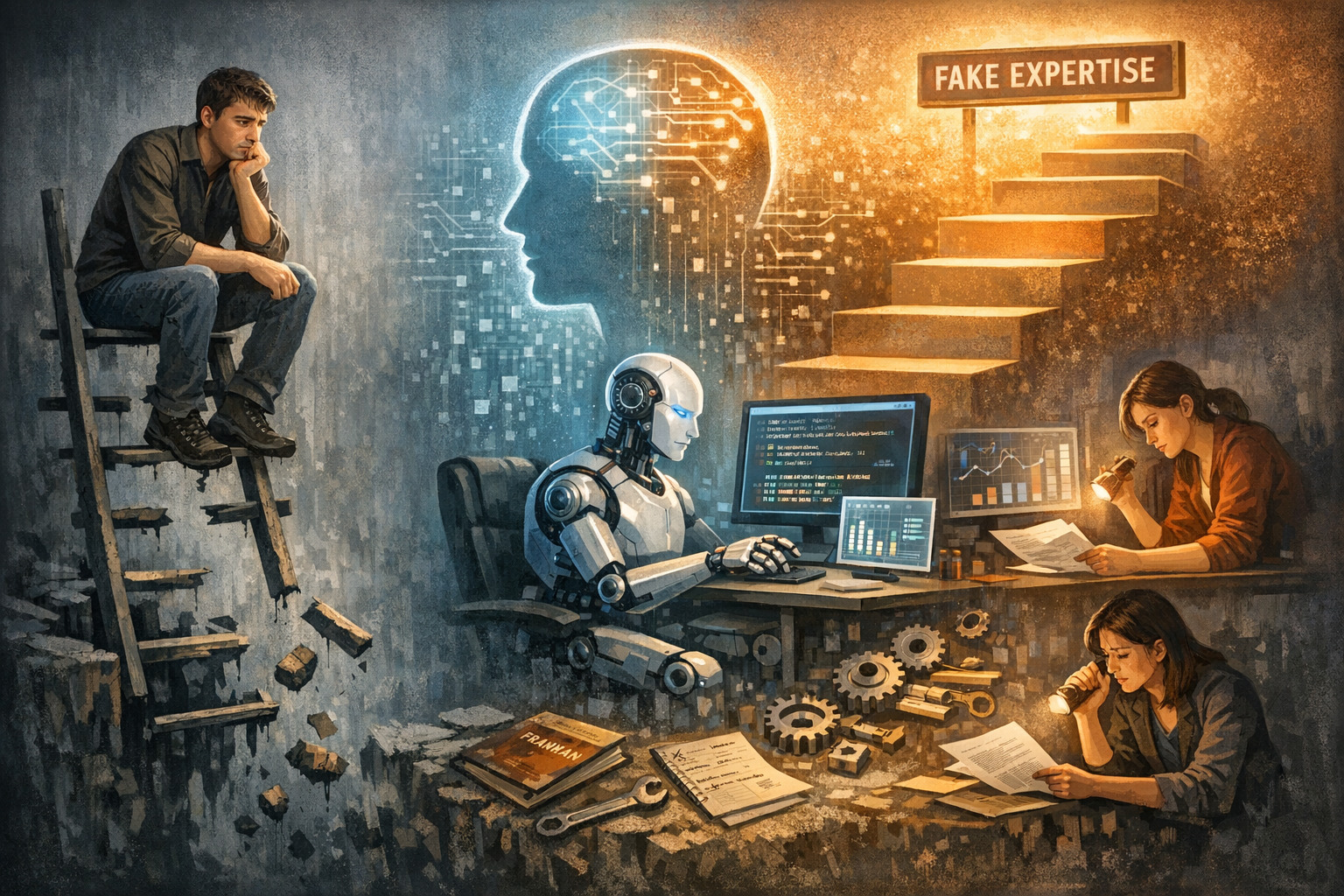

However, this promise of productivity hides a dangerous flaw. For students and junior professionals, the advice is not just inaccurate; it is a pedagogical trap. For the learner, the “doing” is not a distraction from the work; the doing is the work. By shifting from a “constitutive logic” (where AI catalyzes human growth) to a “substitutive logic” (where AI simply replaces human output), we are inadvertently dismantling the very scaffolding required to build a professional mind.

Here are five takeaways on why we must preserve the “doing” to save the “thinking.”

1. You Can’t Play the Lute by Watching an AI

Expertise is not a file that can be passively downloaded; it is an “internalized library” built through haptic and procedural engagement. In educational theory, this is known as Constructivism—the idea that meaning is actively constructed through a human agent’s interaction with their environment.

You may attend every high-level seminar or “Birmingham AI breakout session” where autonomous agents are demonstrated, but you will never become an expert in those systems without trying—and failing—to build them yourself. There is an “axiological” value to the process itself that cannot be found in the final product.

“One becomes a lute player by playing the lute.” — Aristotle

To become an expert, one must move through a phase of manual, routine, and often frustrating practice. This physical and mental friction is what creates durable knowledge. Without the “haptic” experience of manual study, you are not a musician; you are merely a spectator.

2. The “Illusion of Competence” and the New Dunning-Kruger

When we use AI as a “cognitive crutch” to bypass the struggle of problem-solving, we fall victim to the Illusion of Competence. This is a modern evolution of the Dunning-Kruger effect: because the AI produces a polished, “aesthetic” final product, the user develops a level of confidence that far outstrips their actual mastery of the underlying principles.

This reliance leads to “cognitive atrophy.” When automated heuristics replace the procedural labor required to transform information into knowledge, the constructive process of learning is short-circuited. You become an “accidental artist,” reliant on algorithmic serendipity rather than purposeful design. If you cannot solve the math problem or draft the essay manually, you lack the mental model required to even notice when the AI has hallucinated a “fact” or introduced a structural flaw.

3. The Disappearing Rungs of the Career Ladder

The move to automate entry-level “doing” has profound socio-economic consequences.

Historically, junior roles functioned as apprenticeships. By performing the “grunt work,” junior employees developed the tacit knowledge necessary to eventually move into senior-level “thinking” positions.

We are currently witnessing the “silencing of the apprentice.” By automating these foundational roles, we risk destroying the “skills ladder,” bifurcating the world into a small elite of “thinkers” who mastered their craft pre-AI, and a permanent underclass of “facilitators” who lack the depth to lead. This leads to a state of professional malfeasance.

“A worker’s value is increasingly tied to their ability to provide ‘human-in-the-loop’ oversight; however, if a junior employee cannot perform the task manually, they lack the justificatory basis to judge the AI’s performance, leading to a state of professional malfeasance where errors go undetected.”

If a junior auditor cannot perform a manual calculation, or an entry-level artist cannot produce a “ground truth” charcoal sketch to verify spatial perspective, they cannot responsibly audit the AI’s output. They aren’t providing oversight; they are providing blind signatures.

4. AI is Widening the Achievement Gap, Not Closing It

While proponents claim AI democratizes intelligence, a look through the lens of Universal Design for Learning (UDL) suggests that delegating the “doing” can actually entrench inequality. AI does not affect all students equally; it traps nascent learners in a state of “pseudo-competence.”

- The “Coach” Model: High-performing students use AI to refine a robust foundational base. For them, AI is a “sparring partner” that provides granular feedback on work they have already conceptualized.

- The “Crutch” Model: Students with lower baseline skills may use AI to circumvent the very procedural exercises (the “doing”) that provide the scaffolding for their cognitive development.

Instead of empowering these students, the tool renders their manual participation obsolete, ensuring they never develop the “rhetorical muscle” or analytical rigor of their peers.

5. The FACT Framework for Human Agency

To navigate an AI-saturated world, we must adopt the FACT Framework to ensure human agency isn’t lost to automation. This heuristic moves us from task completion to “procedural inquiry.”

- Foundational (F): Core concepts must be mastered AI-free. In an Arts curriculum, this means manual manipulation of media to develop spatial reasoning. In ELA, it means “active reading” without AI summaries to build an internal library of rhetorical

- Applied (A): Once the foundation is set, AI can be used to solve multi-variable problems, shifting the focus to strategic application.

- Critical (C): This is the evaluative labor of auditing AI. In a Mathematics “Algorithmic Verification Protocol,” for example, a student must manually solve a derivation before prompting an AI, then explain every discrepancy between the two. This is analytically impossible without the “doing” skills from the Foundational phase.

- Technical (T): This is the mastery of the tool itself—treating prompt engineering not as a substitute for thought, but as a technical extension of intent.
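For readers who think in code, the Critical step can be pictured as a small comparison routine. The sketch below is only an illustration, not a defined part of the framework: it assumes the student's hand-worked derivation and the AI's derivation are each recorded as a list of steps, and that the protocol counts as finished only once the student has written an explanation for every step where the two differ. The names `Discrepancy`, `find_discrepancies`, and `verification_complete` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Discrepancy:
    step: int    # 1-based position in the derivation
    manual: str  # what the student wrote by hand
    ai: str      # what the AI produced for the same step


def find_discrepancies(manual_steps, ai_steps):
    """Return every step where the manual and AI derivations differ."""
    diffs = []
    longest = max(len(manual_steps), len(ai_steps))
    for i in range(longest):
        manual = manual_steps[i] if i < len(manual_steps) else "<missing>"
        ai = ai_steps[i] if i < len(ai_steps) else "<missing>"
        # Ignore whitespace so that formatting alone is never flagged.
        if "".join(manual.split()) != "".join(ai.split()):
            diffs.append(Discrepancy(i + 1, manual, ai))
    return diffs


def verification_complete(diffs, explanations):
    """True only when every flagged step has a non-empty written explanation."""
    return all(explanations.get(d.step, "").strip() for d in diffs)


# Hypothetical example: the student solves 2x + 6 = 10 by hand first.
manual = ["2x + 6 = 10", "2x = 4", "x = 2"]
ai = ["2x + 6 = 10", "2x = 16", "x = 8"]

diffs = find_discrepancies(manual, ai)
partial = {2: "The AI added 6 to the right-hand side instead of subtracting it."}
full = dict(partial)
full[3] = "The error in step 2 carried forward into the final answer."
```

The design choice worth noticing is that the code can only locate the differences. Writing the explanations in `partial` and `full` is still the student's job, and it requires having done the derivation by hand.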

Conclusion: Preserving the Human in the Loop

The “work” of the future will involve the mind, but the mind cannot function in a vacuum. We must resist the total automation of entry-level tasks in our schools and offices to prevent “skills rot” and institutional fragility. By mandating the continued development of manual and procedural skills, we ensure that technology remains a tool for human enhancement rather than a replacement for human agency.

As you navigate your own professional or educational path, ask yourself: Are you currently building an “internalized library” of expertise through the struggle of doing, or are you just building a digital dependency?