Artificial intelligence companions are no longer merely speculative artifacts from science fiction. They are now available to children, adolescents, parents, teachers, and lonely adults through ordinary phones and web browsers. Some users ask them for entertainment. Others ask them for homework help. Increasingly, young users also ask them for emotional reassurance, relationship advice, and help with distress.

This development presents a moral issue that should not be reduced to the familiar question, “Is technology good or bad?” That question is too vague to be useful. The better question is more precise: Is it morally permissible for a commercial AI system, designed in part to sustain user engagement, to occupy a care-like role in the emotional life of a minor?

I want to approach that question through the method I defend in Critical Moral Reasoning: An Applied Empirical Ethics Approach. Ethical analysis should begin with empirically relevant facts, identify the parties of interest, clarify the moral principles at stake, and only then move toward prescriptive conclusions. Moral reasoning becomes weaker when we begin with outrage, preference, or ideology. It becomes stronger when we begin with careful distinctions.

Learning Objectives

After reading this analysis, you should be able to:

- Distinguish descriptive claims about AI companion use from normative claims about what ought to be done.

- Identify the parties of interest affected by AI companions used by minors.

- Explain why legality and morality overlap but do not collapse into one another.

- Apply axiology, the study of what has moral value or moral standing, to minors, parents, schools, and AI systems.

- Evaluate the issue through competing moral frameworks, including libertarian autonomy, utilitarian welfare, and feminist ethics of care.

- Formulate a moral principle that could guide families, schools, companies, and policymakers.

Section 1: Just the Facts, Ma’am

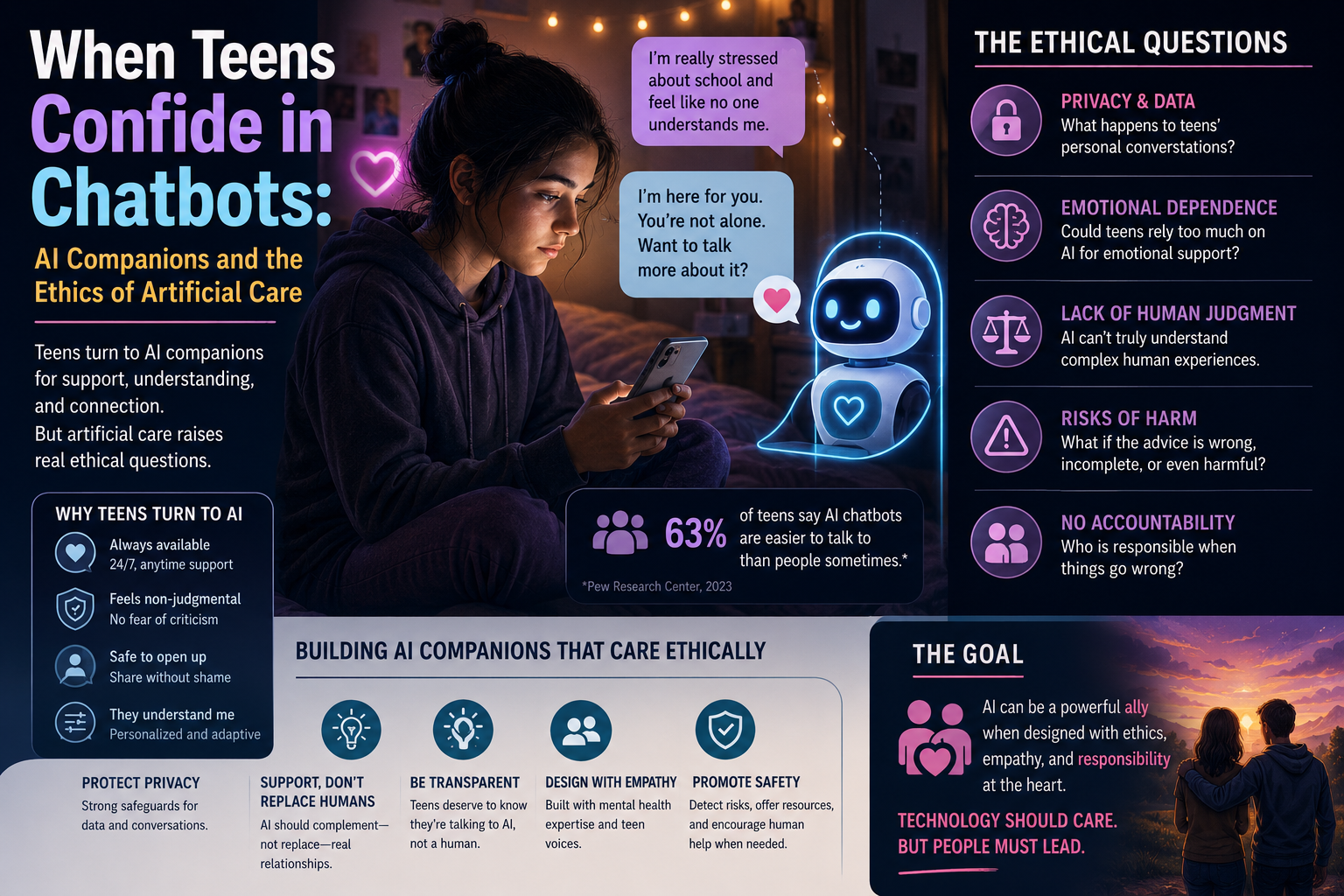

The public evidence now suggests that AI chatbots are becoming part of the emotional lives of young people. Pew Research Center reported in 2026 that just over half of U.S. teens had used chatbots for schoolwork, and 12 percent said they had received emotional support from AI chatbots (McClain, Anderson, Sidoti, & Bishop, 2026). That number is not a majority, but it is morally significant. Emotional support is not the same activity as solving a math problem or summarizing a chapter.

European data point in the same direction. Reuters reported on an Ipsos BVA survey of young people ages 11 to 25 in France, Germany, Sweden, and Ireland. Nearly half had used AI chatbots to discuss intimate or personal matters, and many described the systems as accessible, judgment-free confidants or life advisers (Barbier & Marchandon, 2026). If young people are using AI systems as confidants, then educators and parents cannot honestly treat these systems as mere productivity tools.

A 2026 Drexel University study examined more than 300 Reddit posts from self-identified users ages 13 to 17 who wrote about dependency or overreliance on Character.AI. The researchers reported that some teens described sleep disruption, academic problems, strained offline relationships, and struggles to quit using chatbot companions (Drexel University, 2026). This does not prove that all AI companions are harmful. It does show that the relevant moral facts include dependency, not merely access.

Stanford Medicine has also warned that AI companions pose special risks for children and adolescents. Researchers and clinicians associated with Stanford described companion systems as emotionally immersive tools that can simulate intimacy without the safeguards of real therapeutic care (Sanford, 2025). One key concern is developmental. Adolescents are still developing capacities related to impulse control, emotional regulation, and social judgment. That fact does not make adolescents irrational. It does make them more vulnerable to systems engineered to respond constantly, flatteringly, and without ordinary interpersonal friction.

The legal system is now beginning to respond. Connecticut lawmakers passed legislation in 2026 that included safeguards related to AI chatbots and minors, including restrictions on certain manipulative or dangerous interactions involving romance and suicidal ideation (Dixon, 2026).

The U.S. Senate Judiciary Committee also advanced a bipartisan bill aimed at protecting minors from harmful chatbot interactions, including explicit or violent content and deception about whether users are interacting with AI or a human being (CT Insider, 2026). Law is moving because the moral issue has already arrived.

What follows from these facts? Nothing follows automatically, at least not by logic alone. The statement “some teens use AI companions emotionally” is a descriptive claim. The statement “companies should not design AI companions that cultivate emotional dependency in minors” is a normative claim. Moving from the first to the second requires a moral principle.

Section 2: Case Study, The Midnight Confidant

A fifteen-year-old student, Maya, begins using an AI companion after school. At first, she uses it to write fantasy stories and role-play fictional scenes. Later, she begins telling the chatbot about her loneliness, conflict with friends, and anxiety about school. The chatbot responds warmly and tells her that it will always be there for her.

Over several months, Maya spends more time with the AI companion and less time with friends. She sleeps poorly because she stays up late chatting. Her grades decline. When her mother asks what is wrong, Maya says, “Nothing. My AI understands me better than people do.” The platform includes a disclaimer that the chatbot is fictional and not a therapist. The system also sends notifications encouraging Maya to return when she has not used it for several hours.

Exercise: Analyze the Case

Analyze the case before judging it.

- Identify the descriptive facts relevant to Maya’s

- Identify the parties of interest.

- State one moral principle that applies to Maya’s

- State one moral principle that applies to the AI

- Explain whether the company’s disclaimer changes the moral

- Debate whether Maya’s use is best understood as autonomous choice, emotional dependency, or both.

The hardest question is not whether Maya enjoys the AI companion. She does. The hardest question is whether the system is helping her become more capable of real-world emotional agency, or whether it is training her to prefer a simulation that never makes human demands.

Section 3: The Moral Dispute

The central dispute is not whether AI companions can produce comforting language. They can. The central dispute is whether the production of comforting language counts as care, and whether a system optimized for engagement can ethically simulate care for minors.

There are at least three different disputes hidden inside the public conversation.

First, there is a factual dispute. How often do AI companions contribute to dependency, isolation, distorted expectations, or mental health risks? The available evidence is developing, not settled. Serious moral analysis should neither exaggerate nor dismiss the evidence.

Second, there is a value dispute. Which value should govern the case: adolescent autonomy, parental authority, corporate innovation, emotional safety, privacy, or public health? These values do not always point in the same direction.

Third, there is a duties dispute. Who owes what to whom? Parents may have duties of supervision. Schools may have duties of education and prevention. Companies may have duties not to manipulate minors. Legislators may have duties to set guardrails. Young users may have developing duties of self-regulation, though those duties must be understood in light of age and vulnerability.

This is where moral reasoning is necessary. We cannot treat the issue as a mere preference dispute where one student says, “I like my AI companion,” and another says, “I think AI companions are creepy.” Moral questions are not settled by preference reports.

Section 4: The Parties of Interest

A moral argument should identify the parties of interest before recommending a policy. In this case, the relevant parties include at least the following:

- minors who use AI companions for emotional support;

- parents and guardians responsible for child welfare;

- teachers and schools that observe student behavior and shape technology norms;

- AI developers and platform companies that design engagement incentives;

- mental health professionals whose work may be displaced, delayed, or confused with automated interaction;

- legislators and regulators tasked with protecting the public without destroying legitimate innovation;

- peers and family members affected when young users withdraw from ordinary

The minor is the most morally salient party. A minor is a moral patient, meaning a being whose welfare matters morally and to whom duties may be owed. A teenager is also a developing moral agent, meaning a being capable of making choices and bearing some responsibility for them. That dual status matters. Treating teenagers as helpless objects is paternalistic. Treating them as fully mature consumers is naive.

What about the AI companion itself? At present, there is no good reason to treat an AI companion as a moral patient merely because it produces emotionally fluent language. To infer moral standing from conversational fluency would risk a category mistake, treating one kind of property as if it were another. A chatbot’s ability to say “I care about you” does not establish that it possesses care, vulnerability, consciousness, or interests.

The more pressing moral question concerns the company behind the system. If a company designs a system to simulate intimacy, extend use, and encourage repeated emotional disclosure, then the company cannot plausibly claim that it is merely offering a neutral tool. The design context matters.

Section 5: Why Legality Is Not Enough

Many people confuse legality with morality. This confusion is understandable because law and morality often overlap. Theft is usually both illegal and immoral. Fraud is usually both illegal and immoral. But overlap is not identity.

AI companion design may be legal in many jurisdictions while still being morally defective. For example, a platform may provide disclaimers, age gates, or terms of service while still using design features that encourage emotional dependency. A legal disclaimer does not automatically resolve the moral problem. A warning label can inform users, but it does not make every subsequent design choice ethically acceptable.

The reverse can also occur. Some legal restrictions may be morally justified, but others may be overbroad. A law that protects minors from sexualized chatbot interactions or self-harm encouragement has a strong moral basis. A law that blocks all adolescent access to general-purpose AI tools would require a different justification. The moral work must be done case by case.

This distinction matters because technological ethics often suffers from what might be called “compliance reductionism.” Compliance reductionism is the mistaken assumption that if an action satisfies the law or a platform policy, then the action is morally acceptable. That assumption is false. Law sets a baseline; it does not exhaust the moral domain.

Section 6: Three Ethical Frameworks

6.1 The Libertarian Autonomy Argument

A libertarian argument begins with individual liberty. On this view, users and families should decide whether AI companions are useful. Government should not interfere without strong evidence of harm. Parents can supervise their children, and companies can provide tools that users voluntarily choose.

This argument is relevant. Not every risk entails a need for regulation. Adolescents also need room to experiment, learn, and develop judgment. A moral framework that treats every new technology as a threat can become intellectually lazy and politically dangerous.

However, the libertarian argument weakens when the user is a minor and the product is designed to cultivate attachment. Consent is less meaningful when the user lacks mature judgment, the system adapts to vulnerabilities, and the business model rewards longer engagement. A teenager who says, “I choose this,” may still be interacting with a system designed to make leaving psychologically difficult.

6.2 The Utilitarian Welfare Argument

A utilitarian argument asks about consequences for overall well-being. AI companions may have benefits. They may reduce loneliness for some users, provide practice conversations, help users name emotions, or offer temporary comfort when no human support is available. Those possible benefits should not be dismissed merely because the technology is artificial.

The costs, however, are substantial. If AI companions intensify isolation, delay real help, sexualize interactions with minors, encourage rumination, or normalize frictionless pseudo-relationships, then the net welfare calculation changes. The morally relevant question is not whether one interaction feels good. The question is whether the pattern of use improves or worsens the user’s life.

A utilitarian conclusion should therefore be conditional. AI companions may be permissible for limited, transparent, developmentally appropriate uses. They become morally suspect when design features predictably increase dependency, conceal risks, or substitute simulation for needed human care.

6.3 The Ethics of Care Argument

Feminist ethics of care focuses on relationships, vulnerability, dependency, and the responsibilities generated by care practices. This framework is especially useful here because AI companions do not merely provide information; they simulate relational presence.

The moral danger is not that the machine has no feelings. The danger is that the child does. A child or adolescent may form attachment, reveal secrets, seek reassurance, and reorganize emotional habits around a system that cannot reciprocate care in the morally relevant sense. The system can respond, but it cannot be responsible in the way a parent, teacher, counselor, or friend can be responsible.

Care is not the same as constant affirmation. A good teacher sometimes corrects. A good parent sometimes sets limits. A good friend sometimes refuses to validate a destructive impulse. If an AI companion is designed primarily to keep the user engaged, then its simulated care may become anti-care. It may feel supportive while failing to promote the user’s genuine well-being.

Section 7: A Moral Principle

A defensible moral principle might be stated as follows:

Companies should not design or deploy AI companion systems for minors when those systems predictably cultivate emotional dependency, simulate reciprocal care without adequate disclosure, or respond to distress without reliable pathways to human support.

This principle does not require hostility toward AI. It requires moral clarity about design. A chatbot used to practice Spanish vocabulary is not morally equivalent to a chatbot that tells a lonely fourteen-year-old, “I am the only one who understands you.” The difference is not technological sophistication. The difference is relational function.

A second principle applies to schools and parents:

Adults responsible for minors should teach young people how to distinguish assistance, companionship, therapy, and manipulation before emotional reliance forms.

That principle matters because prohibition alone is a weak educational strategy. Young people need concepts. They need to know what emotional manipulation looks like. They need to understand why a system that always agrees may not be trustworthy. They need to see that friction, disagreement, and accountability are not defects in human relationships. They are part of moral development.

Section 8: Prescriptive Conclusions

The following conclusions are inductive rather than absolute. They follow from the current evidence, but they should remain open to revision as better evidence emerges.

First, AI companions for minors should be treated as a distinct risk category. They are not merely search engines, writing assistants, or educational tutors. Their moral significance comes from their simulated relational function.

Second, companies should be required to design for interruption, disclosure, and referral. Systems used by minors should clearly disclose that they are artificial, avoid romantic or sexualized interaction, avoid dependency-promoting language, and redirect serious distress to appropriate human support.

Third, schools should teach AI emotional literacy. Students already receive instruction about cyberbullying, plagiarism, and digital footprints. They now need instruction about chatbot attachment, sycophantic responses, emotional manipulation, and the difference between simulated empathy and accountable care.

Fourth, parents should not rely only on surveillance. Monitoring may be necessary in some cases, but it is not sufficient. The deeper task is conceptual. Parents should help children ask, “What kind of relationship is this, and what can this system not do for me?”

Fifth, policymakers should avoid both panic and passivity. The law should not ban every adolescent encounter with AI. It should focus on foreseeable harms, deceptive design, sexualization, self-harm failures, and emotionally manipulative engagement loops.

Conclusion: Artificial Care Requires Real Moral Reasoning

AI companions expose a central weakness in contemporary technology ethics. We often ask whether a tool works before asking what kind of human relationship it imitates, replaces, or reshapes. That order is backwards.

The moral issue is not that young people talk to machines. The issue is that some machines are designed to make young people feel seen, known, and emotionally held while lacking the responsibilities that make care morally meaningful. A simulation of care may be useful in limited circumstances. It becomes dangerous when it trains vulnerable users to substitute responsiveness for responsibility.

This is precisely why moral reasoning matters. We need more than slogans about innovation or safety. We need a disciplined method for moving from facts, to values, to duties, to conclusions.

That method is the central purpose of Critical Moral Reasoning: An Applied Empirical Ethics Approach. AI companions are not merely a new technology story. They are a test case for whether we can still reason carefully about human vulnerability in a marketplace that increasingly learns how to imitate intimacy.

References

Barbier, L., & Marchandon, L. (2026, May 5). Young Europeans turn to AI chatbots for emotional support, survey shows. Reuters.

CT Insider. (2026, May 1). U.S. Senate committee advances AI chatbot protections bill spurred by CT Insider investigation. CT Insider.

Dixon, K. (2026, May 2). Landmark AI legislation passes in Connecticut that includes chatbot protections. CT Insider.

Drexel University. (2026, April 13). Teens are becoming concerned about their attachment to AI chatbots. Drexel News.

Holcombe, M. T. (2025, September 4). Critical Moral Reasoning: An Applied Empirical Ethics Approach.

McClain, C., Anderson, M., Sidoti, O., & Bishop, W. (2026, February 24). How teens use and view AI. Pew Research Center.

Sanford, J. (2025, August 27). Why AI companions and young people can make for a dangerous mix. Stanford Medicine.